The Problem

Restaurant discovery is moving from search results to AI answers.

People are asking ChatGPT, Perplexity, and Google AI Overviews where to eat, what is worth it, what is good for kids, where to go before a game, and which places are not tourist traps.

But AI answers do not behave like traditional rankings. They change across engines, sessions, and repeated runs. They often return a short list, not a full market.

That means the useful question is not “Where do we rank?”

It's: Do we show up at all when someone asks for the kind of experience we sell?

That is the problem this study was built to measure.

Executive Summary

NOVA Brandworks studied how Boston’s North End food scene appears in AI search by analyzing 141 culinary businesses, capturing 78 AI-generated answers, reviewing 127 websites for technical visibility issues, and checking 116 websites for the phrases and signals they use to describe their offerings.

The headline finding was clear:

AI search compresses local dining markets.

Across the tested prompts, AI surfaced 53 of 141 eligible North End culinary businesses at least once. That means 88 eligible businesses, or 62%, never appeared in any of the captured AI answers.

This was not mainly a crawler-blocking problem. In the technical audit, 0 of 127 sites blocked the major AI search crawlers we checked. The bigger issue was whether AI could confidently understand, match, and cite each business for specific demand moments.

A restaurant could have strong reviews, a working website, and clear on-site positioning, and still be skipped when AI answered a high-intent prompt. A different restaurant could show up even when its website did not explicitly claim that use case, likely because AI was relying on reviews, citations, local lists, or other off-site signals.

For restaurant owners, the takeaway is uncomfortable but useful:

Your website can say you are great for date night, private events, vegan options, seafood, or pizza. AI may still not connect you to those searches.

Why The North End

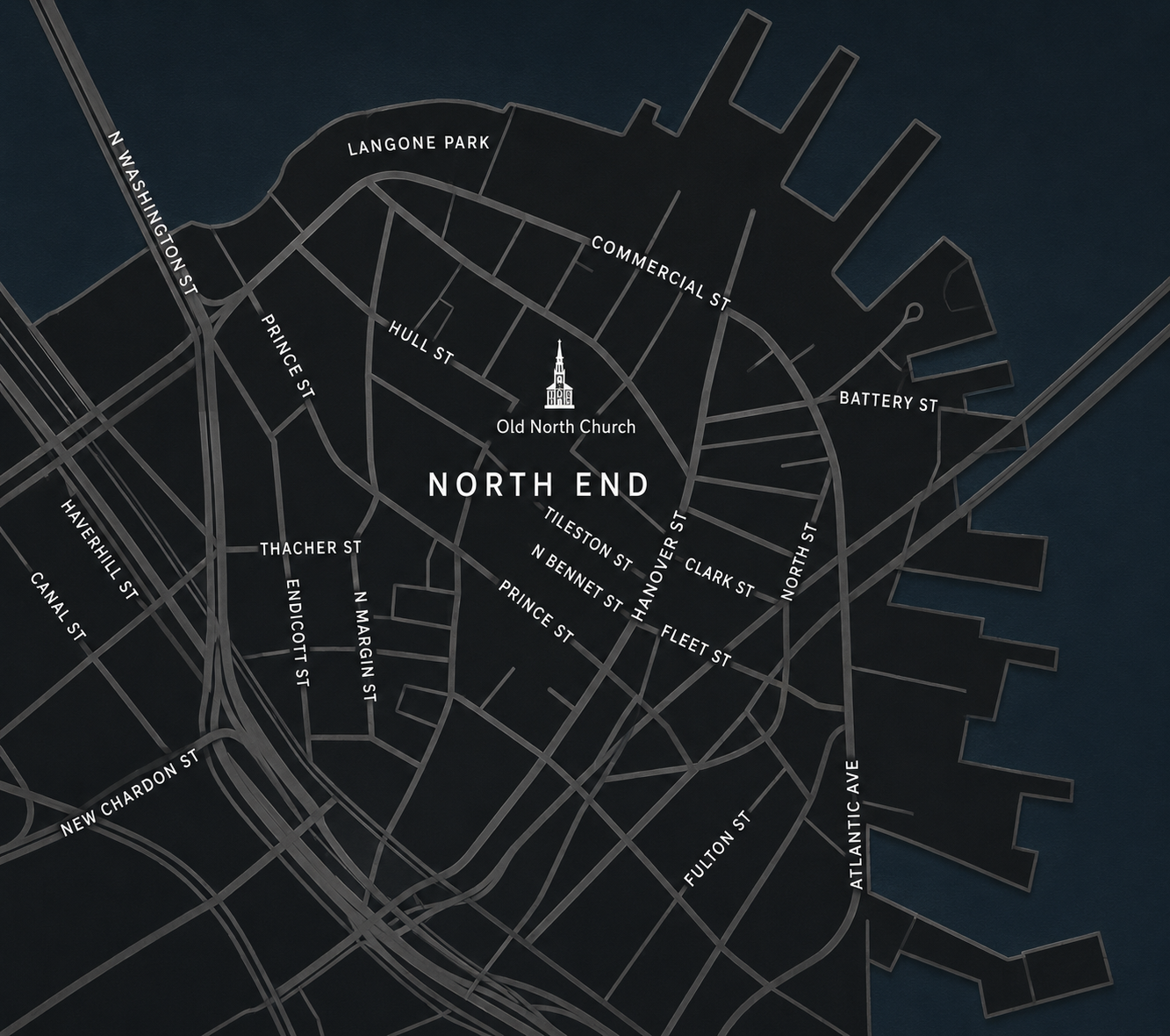

The North End is one of the most AI-search-relevant neighborhoods in Boston.

It's dense and it has a clear identity. It has tourists, locals, event traffic from TD Garden, destination bakeries, food tours, high-end Italian restaurants, pizza shops, seafood spots, bars, cafes, and family-owned institutions that have been around for decades.

It is exactly the kind of place people ask AI about:

- Where should I eat in the North End this weekend?

- What's the best cannoli in Boston?

- What restaurant is good for a date night?

- Where can I take kids?

- Who has vegan options?

- What is worth it and not a tourist trap?

That makes it a strong test market for AI visibility.

What We Studied

This study combined four data layers.

1. A North End culinary census

We started with 174 raw culinary businesses and cleaned the list down to 141 included businesses across restaurants, bars, bakeries, cafes, food tours, wine bars, and specialty food businesses.

Important denominator note: we are not calling all 141 businesses "restaurants." The full 141 is the North End culinary market, meaning the set of businesses AI can reasonably recommend for broad food-scene prompts. Inside that:

- Strict restaurants: 71 businesses categorized as restaurants. Of those, 60 are located in the core North End and 11 are in adjacent areas included in the study, such as Causeway, waterfront, or nearby North End food-scene spillover zones.

- Dining venues: 114 included businesses when restaurants, bars, cafes, and the wine bar are counted together.

- Core dining venues: 90 businesses when that same dining-venue definition is limited to the core North End.

For public reporting, we use "culinary businesses" for the full 141, "strict restaurants" for the 71 restaurant-category businesses, and "dining venues" when bars and cafes are part of the user choice set.

2. AI answer testing

We tested 13 real-world prompts across ChatGPT, Perplexity, and Google AI Overviews. Each prompt was run twice per engine, creating 78 total captures.

The prompt set was built around real dining decisions people ask AI to help with: where to eat in the North End, who makes the best cannoli, where to go for date night, which restaurants work for kids or big groups, who has the best pizza, the best seafood, handmade pasta, or vegan options, where to eat before a Celtics game, which spots are worth it and not tourist traps, and how to experience the North End food scene overall.

We measured the visibility percentage, not the ranking position. A business received credit when it appeared in an answer for a prompt where its category was eligible.
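As a rough illustration of how this metric can be computed, the sketch below enumerates a prompt × engine × run capture grid and scores one prompt against its own eligible denominator. The function names, business names, and capture contents are hypothetical placeholders, not the study's tooling or data.

```python
from itertools import product

ENGINES = ["ChatGPT", "Perplexity", "Google AI Overviews"]
RUNS = [1, 2]  # each prompt is run twice per engine


def capture_grid(prompts):
    """Every (prompt, engine, run) combination that needs a capture."""
    return list(product(prompts, ENGINES, RUNS))


def market_coverage(captures, eligible):
    """Share of eligible businesses that surfaced at least once.

    captures: list of sets, one per captured answer, of businesses mentioned.
    eligible: set of businesses eligible for this prompt (the denominator).
    """
    if not eligible:
        raise ValueError("eligible set must not be empty")
    # Mentions of businesses outside the eligible set earn no credit.
    surfaced = set().union(*captures) & eligible
    return len(surfaced) / len(eligible), surfaced


# Hypothetical data for a single small-category prompt (3 engines x 2 runs).
eligible = {"Tour A", "Tour B", "Tour C", "Tour D", "Tour E", "Tour F"}
captures = [
    {"Tour A", "Tour B"},
    {"Tour A"},
    {"Tour A", "Tour C", "Bakery X"},  # Bakery X is not eligible here
    {"Tour A", "Tour D"},
    {"Tour B"},
    {"Tour A"},
]

coverage, surfaced = market_coverage(captures, eligible)
print(f"{len(surfaced)} of {len(eligible)} eligible businesses surfaced ({coverage:.1%})")
```

With 13 prompts, `capture_grid` yields 13 × 3 × 2 = 78 combinations, which matches the capture count reported above.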

3. Technical AI access audit

We audited 127 websites for crawler access, homepage indexability, schema, sitemaps, llms.txt, menu links, reservation links, and platform signals.
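One way to run the crawler-access part of such an audit is to parse each site's robots.txt with Python's standard library. This is a minimal sketch, not the study's actual tooling. The user-agent tokens listed are an assumption, since the brief does not name the crawlers that were checked, and the robots.txt bodies are made-up examples.

```python
from urllib.robotparser import RobotFileParser

# Assumed user-agent tokens; the brief does not list which crawlers were checked.
AI_CRAWLERS = ["GPTBot", "OAI-SearchBot", "PerplexityBot", "Google-Extended"]


def blocked_crawlers(robots_txt, homepage_url, agents=AI_CRAWLERS):
    """Return the agents that robots.txt forbids from fetching the homepage."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, homepage_url)]


# Made-up robots.txt bodies for illustration.
open_site = "User-agent: *\nDisallow: /admin/\n"
blocking_site = "User-agent: GPTBot\nDisallow: /\n\nUser-agent: *\nDisallow:\n"

print(blocked_crawlers(open_site, "https://example.com/"))
print(blocked_crawlers(blocking_site, "https://example.com/"))
```

A site counts as "not blocking" in this sketch when the returned list is empty. Parsing a string keeps the check offline; a live audit would first download each site's robots.txt.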

4. Screaming Frog on-site positioning crawl

We crawled 116 matched audit-eligible websites to identify which businesses actually position themselves for high-value use cases like date night, private events, kids, vegan options, pizza, seafood, cannoli, handmade pasta, catering, and food tours.

That let us compare two things:

What the business says it offers vs. what AI actually recommends it for.

The expanded Screaming Frog pass is the source for the seafood, pizza, cannoli, pastry, dessert, and handmade pasta positioning counts used later in this brief. Those terms were added after the first custom-search pass so category-specific claims would not rely on proxies.
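The comparison between what a business claims and what AI recommends reduces to set arithmetic once both lists exist. The sketch below assumes two inputs per use case: the businesses whose sites position for it and the businesses AI surfaced for the matching prompt. All names are hypothetical.

```python
def positioning_gap(positioned, surfaced):
    """Split businesses into the three groups the comparison cares about."""
    positioned, surfaced = set(positioned), set(surfaced)
    return {
        "positioned_and_surfaced": positioned & surfaced,
        "positioned_not_surfaced": positioned - surfaced,
        "surfaced_without_positioning": surfaced - positioned,
    }


# Hypothetical inputs for one use case (for example, a dietary prompt).
positioned = {"Trattoria A", "Osteria B", "Cafe C", "Bistro D"}
surfaced = {"Trattoria A", "Pizzeria E", "Ristorante F"}

gap = positioning_gap(positioned, surfaced)
for group, names in gap.items():
    print(f"{group}: {len(names)} -> {sorted(names)}")
```

The third group is the one that points to off-site signals: those businesses were recommended without matching language on their own sites.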

Research Context

This framing lines up with the KDD 2024 paper GEO: Generative Engine Optimization. The paper argues that generative engines change the search problem because they synthesize multiple sources into an answer instead of showing a simple list of blue links.

That matters for measurement. Traditional rank position is too brittle for this environment. Visibility has to be measured by whether a source, brand, or business appears in the generated answer at all, how often it appears across repeated runs, and whether the answer connects it to the right user intent.

SparkToro's 2026 research on AI recommendation consistency points in the same direction. Their study found that AI tools rarely return the same recommendation list twice, and ordered rankings are even less stable. But the research also found that repeated visibility percentage can still reveal which brands or entities sit inside an AI system's consideration set.

That is why this study does not claim to measure "AI rankings." We measure repeated visibility. Did the business appear? For which prompts? Across which engines? How often? And did AI connect it to the demand moment the business should reasonably own?

The GEO paper also supports a practical content point: evidence-rich and clearer writing performed better than old-school keyword stuffing. Citations, quotations, statistics, readability, and fluency improved visibility in the study; keyword stuffing did not.

That is how we treat technical optimization here. Schema, clean menus, reservation links, entity consistency, and crawlability matter. They are not magic. The goal is not to pull one lever and watch an AI ranking move. The goal is to make the business easier to understand, verify, and recommend across the places AI systems look.

Finding 1: AI Compresses The Market

AI surfaces only a handful of restaurants per prompt

Across the highest-intent North End prompts, AI surfaced only 5 to 9 percent of the eligible businesses. Each row shows the share of eligible businesses that surfaced at least once.

AI answers did not spread attention evenly across the North End.

They repeatedly selected a small set of businesses, while most eligible businesses never surfaced.

Across the 78 captures, AI mentioned 53 of 141 eligible North End culinary businesses at least once. The remaining 88 businesses did not appear in any captured answer.

Some prompts were especially compressed:

This is the core strategic shift.

Traditional SEO rewards being somewhere on the page. AI search often behaves more like a concierge. It answers with a shortlist. If you are not in that shortlist, you may be invisible for that moment. For that reason, each prompt in this study uses its own eligible denominator instead of pretending every North End food business competes for every search.


There was also a flip side: when AI had strong consensus, it was very stable. Neptune Oyster appeared as the top seafood recommendation in all 6 seafood captures. North End Boston Food Tour appeared as the top food-tour recommendation in 5 of 6 food-tour captures. Terramia appeared as the top vegan-options recommendation in 5 of 6 vegan captures.

That makes the absences more meaningful, because AI is not random noise. For some demand moments, it repeatedly converges on the same winners. The strategic question is why those businesses have enough signal to be chosen, while similar businesses do not.

When AI converges, it stays converged

For some demand moments, AI repeatedly returned the same business across engines and runs. That stability shows the consensus is a real signal rather than noise.

Finding 2: On-Site Positioning Does Not Guarantee AI Visibility

Saying it on your site isn't enough

Many North End restaurants position for these use cases on their websites. AI surfaces far fewer. The gap is what AI doesn't trust from the website alone.

The Screaming Frog site crawl showed that many businesses are actively positioning for valuable use cases on their websites.

For example, among the 116 matched audit-eligible sites:

But AI did not reliably reward that positioning.

The clearest mismatch was big groups and private dining. Inside the big-group prompt denominator, 24 matched sites positioned for big group or private dining demand, but only 9 matched businesses surfaced in the big-group AI prompt. Nineteen of the 24 businesses that positioned for the use case did not appear, so only 5 both positioned and surfaced.

Seafood showed a similar compression pattern. Twenty-two matched restaurant sites positioned for seafood, oyster, or lobster language, but only 4 matched businesses surfaced in the seafood prompt.

Pizza was also compressed. Ten matched eligible restaurant sites positioned for pizza, but only 3 positioned businesses surfaced in the pizza prompt. Across the full eligible prompt universe, AI surfaced 4 pizza businesses total.

Cocktail positioning also did not translate into date-night visibility in this pilot. Thirteen matched sites positioned around cocktails, but none of those businesses surfaced in the date-night prompt.

The practical point:

Saying the right thing on your site is necessary, but it is not sufficient. AI has to understand, trust, and connect that claim to the user's prompt.

Finding 3: AI Often Infers Use Cases From Off-Site Signals

AI fills in the blanks from somewhere else

When sites don't position for a use case, AI infers it from reviews, citations, and aggregator data. Sometimes that helps. Sometimes it sends the customer somewhere else.

Some of the strongest findings came from the opposite pattern: businesses showed up in AI answers even when their websites did not clearly position for those use cases.

Family-friendly dining was the clearest example. Across all 116 matched websites, only 2 used clear family or kids positioning language. Among the matched restaurant websites eligible for the “good with kids” prompt, none used that language. Yet AI surfaced 10 matched restaurants when asked which North End restaurants are good with kids.

Vegan and dietary searches showed a similar pattern. Twelve matched sites positioned for vegan, vegetarian, or gluten-free options. But only 2 both positioned and surfaced. Six businesses surfaced without matching on-site dietary language.

That does not automatically mean AI made the recommendations up. It likely means AI was drawing from off-site sources: reviews, listicles, reservation platforms, local directories, maps data, niche directories, community threads, and aggregated customer language.

What AI Was Pulling From

In the dietary prompts, AI cited or surfaced sources that were not just restaurant websites. Examples included a Reddit thread about dairy-free and vegan Italian options in the North End, a Spinach Guide page for Terramia Ristorante, and a HappyCow listing for Antico Forno.

Those sources matter because they contain the exact kind of third-party language AI can use to connect a restaurant to a demand moment: vegan options, dairy-free dining, accommodating staff, labeled menu items, and North End context.

That does not prove any single source caused a recommendation, but it does show that AI visibility is shaped by more than what a restaurant says on its own site. Reviews, community threads, niche directories, and third-party profiles can all help define what AI thinks a business is known for.

Source Examples

For owners, this is a big deal.

If your reviews, third-party profiles, menus, and local citations do not reinforce the same story as your website, AI may form its own version of your positioning. Sometimes that helps. Sometimes it sends the customer somewhere else.

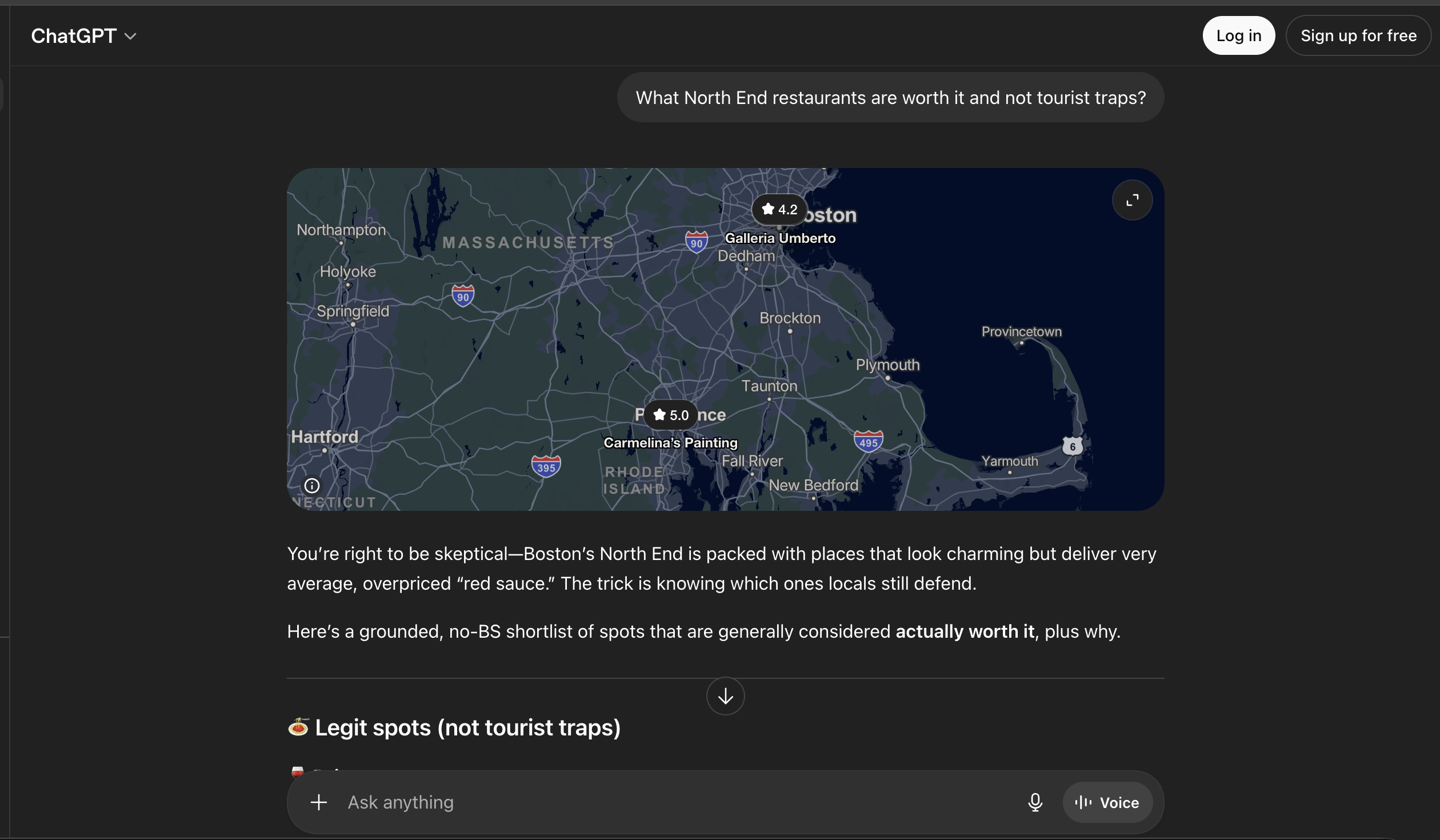

When AI Finds The Wrong Business

In multiple ChatGPT captures, Carmelina’s appeared with map context tied to a painting business in Pawtucket, Rhode Island, rather than the North End restaurant on Hanover Street.

Carmelina’s official site lists the business correctly at 307 Hanover Street in Boston’s North End. We did not find Pawtucket or painter references in its live homepage schema, which suggests the issue was an AI/entity-matching error rather than a visible website-address error.

The site does use generic LocalBusiness schema rather than more specific Restaurant schema. That does not prove the schema caused the mismatch, but it makes the example useful: AI visibility is not only about being mentioned. It is also about being matched to the right business, category, and location.

Finding 4: Technical Access Was Not The Main Problem

It's not a crawler problem

A common assumption is that businesses are invisible in AI because they are blocking AI bots.

That was not what we found in this dataset.

What's actually missing

Across 127 technical-audit-eligible sites:

- 0 blocked the major AI search crawlers we checked.

- 6 had homepage noindex issues.

- 97 had no LocalBusiness schema.

- 111 had no Restaurant schema.

- 77 had no homepage reservation link.

- 57 had no homepage menu link.

- 87 had no llms.txt.

The read: technical access looked like table stakes. The businesses that surfaced were indexable, and none blocked the major AI search crawlers we checked. But technical completeness did not explain the winners by itself.
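Two of the checks listed above, homepage noindex and schema presence, can be approximated with the standard library alone. This sketch is an assumption about how such a check could work, not the audit's real method. It only reads a robots meta tag and top-level JSON-LD `@type` values, it matches type names literally, and it ignores `@graph` wrappers and HTTP headers. The sample HTML is invented.

```python
import json
from html.parser import HTMLParser


class HomepageSignals(HTMLParser):
    """Collects robots meta directives and JSON-LD @type values from one page."""

    def __init__(self):
        super().__init__()
        self.noindex = False
        self.schema_types = set()
        self._in_jsonld = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            if "noindex" in (attrs.get("content") or "").lower():
                self.noindex = True
        elif tag == "script" and (attrs.get("type") or "").lower() == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            self._record_types("".join(self._buffer))

    def _record_types(self, raw):
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            return  # malformed JSON-LD is skipped in this sketch
        items = data if isinstance(data, list) else [data]
        for item in items:
            if isinstance(item, dict):
                types = item.get("@type", [])
                self.schema_types.update([types] if isinstance(types, str) else types)


def audit_homepage(html):
    parser = HomepageSignals()
    parser.feed(html)
    parser.close()
    return {
        "noindex": parser.noindex,
        "has_local_business_schema": "LocalBusiness" in parser.schema_types,
        "has_restaurant_schema": "Restaurant" in parser.schema_types,
    }


# Invented homepage: noindex set, generic LocalBusiness schema, no Restaurant schema.
SAMPLE_HTML = """<html><head>
<meta name="robots" content="noindex, follow">
<script type="application/ld+json">{"@type": "LocalBusiness", "name": "Example Trattoria"}</script>
</head><body></body></html>"""

result = audit_homepage(SAMPLE_HTML)
print(result)
```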

LocalBusiness schema was more common among top-answer businesses than in the full audit baseline, but Restaurant schema was not. llms.txt appeared at the same rate in top-answer businesses as it did across the full technical audit. Menu and reservation links were also roughly in line with the baseline.

The individual examples make the point clearer. Neptune Oyster had no Restaurant schema and no llms.txt, but appeared as the top seafood recommendation in all 6 seafood captures. Strega had one of the strongest technical setups in the pilot, including Restaurant schema, Menu schema, address, sameAs, a reservation link, llms.txt, and a sitemap, yet it received zero mentions in the formal captures.

The crawler-blocking finding matters because it changes the diagnosis.

For this market, the problem was not usually, "AI cannot access the site."

It was more often, "AI does not have a strong enough, consistent enough entity picture to choose this business for the right demand moments."

Schema, menu clarity, reservation signals, third-party citations, reviews, and page-level positioning are not magic levers by themselves. But together, they help AI systems understand what the business is, what it is known for, and when it should be recommended.

Finding 5: Small Categories Have Different Visibility Dynamics

Food tours behaved differently from broad restaurant categories.

The prompt asking for the best food tour had a much smaller eligible universe: 6 businesses. AI surfaced 4 of them, for 66.7% market coverage.

That does not mean food tours are automatically better optimized than restaurants. It means the market is smaller and easier for AI to compress without excluding as many legitimate options.

This is an important reporting point.

AI visibility should be judged against the size and shape of the competitive universe. A 20% visibility rate may be strong in one category and weak in another.

What This Means For Restaurant Owners

AI search is not just another rankings dashboard.

For hospitality businesses, it is closer to a real-time recommendation layer. The engine is deciding which businesses fit the user's situation: date night, kids, dietary needs, big group, late-night dessert, pizza, seafood, food tour, pre-game dinner, or local favorite.

That means the work is not only technical SEO.

The work is entity clarity.

- Can AI tell what you are?

- Can it tell what you are best for?

- Can it find the same story on your website, your menu, your reviews, your reservation platform, your Google profile, and trusted third-party sources?

- Can it distinguish you from the restaurant down the street?

- Can it recommend you without guessing?

If the answer is no, you do not have an AI ranking problem.

You have an AI visibility problem.

What Restaurants Should Fix First

Based on the pilot, the first fixes should be practical and demand-specific.

1. Build pages or sections around real customer moments

Do not only describe the restaurant generically. Make the important use cases explicit: date night, groups, private dining, family dinner, vegan options, gluten-free options, seafood, handmade pasta, pizza, cannoli, late-night, pre-game dining, catering, and food tours where relevant.

2. Strengthen Restaurant and LocalBusiness schema

Most sites in the audit were missing basic structured data. Schema should reinforce address, phone, hours, menu, reservation links, sameAs profiles, cuisine, price range, and business type.
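For reference, the fields named above map onto standard schema.org properties of the Restaurant type. The sketch below builds a JSON-LD object in Python and prints it for embedding in a `<script type="application/ld+json">` tag. Every value is a placeholder for a fictional business, and the helper name is hypothetical; which properties an AI system actually reads is not something this pilot established.

```python
import json


def restaurant_jsonld(name, url, phone, street, city, region, postal_code,
                      cuisine, price_range, hours, menu_url, reservation_url,
                      same_as):
    """Build a schema.org Restaurant object covering the fields listed above."""
    return {
        "@context": "https://schema.org",
        "@type": "Restaurant",
        "name": name,
        "url": url,
        "telephone": phone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
        },
        "servesCuisine": cuisine,
        "priceRange": price_range,
        "openingHours": hours,
        "hasMenu": menu_url,
        "acceptsReservations": reservation_url,
        "sameAs": same_as,
    }


# Placeholder values for a fictional business.
data = restaurant_jsonld(
    name="Example Trattoria",
    url="https://example.com/",
    phone="+1-617-555-0100",
    street="1 Example Street",
    city="Boston",
    region="MA",
    postal_code="02113",
    cuisine=["Italian", "Seafood"],
    price_range="$$$",
    hours=["Mo-Su 11:00-22:00"],
    menu_url="https://example.com/menu",
    reservation_url="https://example.com/reserve",
    same_as=["https://www.instagram.com/example", "https://www.facebook.com/example"],
)

print(json.dumps(data, indent=2))
```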

3. Make menus and reservation paths easy to parse

A menu hidden in an image, PDF, or third-party widget is harder to use as evidence. AI systems need clean, crawlable information about what you serve and how customers book.

4. Align third-party profiles with the same positioning

If the website says "private dining" but reviews, reservation platforms, and local profiles do not, AI may not connect the business to group-dining prompts. The same applies to vegan, family-friendly, seafood, pizza, and date-night searches.

5. Track visibility percentage, not rank position

AI answers are variable. Rank position can change from run to run. The more useful metric is how often a business appears across a controlled prompt set, engines, and repeated runs.
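A simple way to track this per business is to count the share of captures that mention it and how many engines it appears in. The sketch below assumes each capture is stored as a record with an engine name and a list of mentioned businesses. The record layout, function name, and data are hypothetical.

```python
from collections import defaultdict


def visibility_report(captures):
    """Per-business appearance rate and engine spread across a capture set."""
    total = len(captures)
    hits = defaultdict(int)
    engines = defaultdict(set)
    for capture in captures:
        # Count a business once per capture, even if the answer repeats it.
        for name in set(capture["mentioned"]):
            hits[name] += 1
            engines[name].add(capture["engine"])
    return {
        name: {"rate": hits[name] / total, "engines": len(engines[name])}
        for name in hits
    }


# Hypothetical captures for one prompt: 3 engines x 2 runs.
captures = [
    {"engine": "ChatGPT", "mentioned": ["Oyster Bar A", "Grill B"]},
    {"engine": "ChatGPT", "mentioned": ["Oyster Bar A"]},
    {"engine": "Perplexity", "mentioned": ["Oyster Bar A", "Grill B"]},
    {"engine": "Perplexity", "mentioned": ["Oyster Bar A"]},
    {"engine": "Google AI Overviews", "mentioned": ["Oyster Bar A"]},
    {"engine": "Google AI Overviews", "mentioned": ["Oyster Bar A", "Raw Bar C"]},
]

report = visibility_report(captures)
for name, stats in sorted(report.items(), key=lambda kv: -kv[1]["rate"]):
    print(f"{name}: {stats['rate']:.0%} of captures, {stats['engines']} engine(s)")
```

Rerunning the same prompt set on a schedule and comparing these rates over time gives a trend line that does not depend on where a business sat in any single answer.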

The Strategic Takeaway

The North End study points to a new kind of local market share: recommendation presence.

Not revenue share.

Not Google rank.

Not who has the best-looking website.

The question is simpler:

When someone asks AI where to go, are you in the answer?

For broad prompts, only a small slice of the North End food scene appeared. For date night, the list got even smaller. For vegan options, AI pulled from sources beyond restaurant websites. For big groups, many businesses that market private dining still did not appear.

That is the opening.

Most restaurants are not thinking about this yet. They are treating AI search like a novelty, a rankings report, or a technical SEO issue.

But AI is already shaping consideration. The businesses that win will be the ones that make their best use cases clear everywhere AI looks: their website, menu, reviews, reservation profiles, local coverage, and community mentions.

Methodology Note

How we measured AI visibility

Four data layers: a cleaned culinary census, prompt-driven AI captures across three engines, a technical access audit, and a Screaming Frog positioning crawl.

Data current as of May 6, 2026. This is a pilot research brief, not a final statistical benchmark for the entire neighborhood.

The study used a cleaned census of 141 included North End culinary businesses, including 71 strict restaurants and 114 dining venues when restaurants, bars, cafes, and the wine bar are grouped together. It also used 13 prompts, 3 AI search experiences, and 2 runs per prompt per engine (78 captures in total), plus a 127-site technical audit and a Screaming Frog site crawl of 116 matched audit-eligible websites.

The findings should be read as directional evidence about AI visibility patterns, market compression, and positioning mismatch. They should not be read as a permanent ranking of North End businesses.

AI answers change. That is exactly why visibility percentage is a better metric than rank position.

Sources Referenced

- Aggarwal, Pranjal, et al. GEO: Generative Engine Optimization. arXiv, accepted to KDD 2024.

- Fishkin, Rand. NEW Research: AIs are highly inconsistent when recommending brands or products; marketers should take care when tracking AI visibility. SparkToro, January 27, 2026.

Get Your AI Visibility Scorecard

Find out where your restaurant stands

A private AI Visibility Scorecard for your business. Five questions answered, one prioritized fix list.


NOVA Brandworks is turning this research into private AI Visibility Scorecards for restaurants, bars, bakeries, cafes, and food businesses.

Each scorecard answers five questions:

- Do you appear when people ask AI for your category?

- Which prompts and engines mention you?

- Which competitors appear instead?

- What does AI seem to understand or misunderstand about your business?

- What should you fix first to improve visibility?

If your restaurant depends on tourists, locals, event traffic, private dining, reservations, reviews, or word-of-mouth discovery, AI search is already part of your customer journey.

The question is whether you are showing up.
